✨ AI Summary

- Artificial intelligence has made significant progress but still faces the challenge of "hallucination," where AI systems confidently provide incorrect or outdated information, impacting trust.

- Retrieval-augmented generation (RAG) addresses this by combining data retrieval with response generation, improving accuracy and timeliness.

- RAG enhances AI systems' reliability, particularly in dynamic industries like DeFi, by providing real-time information for responses.

- RAG systems are increasingly adopted in customer support, finance, healthcare, and enterprise knowledge management, offering precise and up-to-date solutions.

- While RAG introduces complexities like data quality reliance and increased response time, its benefits in accuracy and reliability outweigh the challenges.

Artificial intelligence has come a long way. It can write, summarize, and even reason. But one critical problem still holds it back: “hallucination.” AI systems can sound confident while being completely wrong. They may generate outdated answers, cite incorrect facts, or miss recent updates entirely.

For businesses, this goes beyond a technical limitation and directly impacts trust. That is where RAG in AI models comes in. Retrieval-augmented generation (RAG) is not a replacement for AI models. It is what makes them reliable. In this blog, you will learn what a RAG model is, how it works step by step, why it solves the hallucination problem, and how it is becoming the foundation for building accurate and trustworthy AI systems.

The Problem: Why AI Alone Cannot Be Trusted

Let’s take a real DeFi scenario.

A user asks an AI, “What is the best leverage to use on BTC right now?” A standard model might confidently respond, “Use 10x leverage to maximize gains.” It sounds helpful. It sounds confident. But it is incomplete and potentially dangerous.

Why?

Because the AI is not aware of real-time conditions, such as:

- Current market volatility

- Funding rates

- Liquidity depth

- Immediate risk of liquidation

This happens because of something called a “knowledge cutoff”. AI models, such as LLMs, are trained on data up to a certain point. Beyond that, they do not truly “know” what is happening right now. They generate answers based on patterns rather than live data. The result: The user follows the advice, enters a leveraged position, and gets liquidated within minutes.

Why This Matters

In DeFi, where every action involves real money, the consequences compound: wrong guidance leads to financial loss, missing context leads to poor decisions, and overconfidence in AI creates false trust.

These are not rare situations. They happen every day. RAG solves this by adding real-time retrieval to AI systems. Instead of guessing, the AI fetches current market data, protocol conditions, and relevant context before responding. This is what makes AI not just intelligent but reliable in real financial environments like DeFi.
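As a rough illustration of fetching current market data before responding, the sketch below places a market snapshot ahead of the user's question in the prompt. The `MarketSnapshot` fields, the `fetch_snapshot` helper, and the sample values are all hypothetical; a real platform would replace them with calls to its own market-data source.

```python
from dataclasses import dataclass


@dataclass
class MarketSnapshot:
    """Hypothetical container for live data; field names are assumptions."""
    symbol: str
    funding_rate_pct: float      # current funding rate, in percent
    volatility_24h_pct: float    # 24-hour volatility, in percent
    liquidity_depth_usd: float   # order-book depth near the mid price


def fetch_snapshot(symbol: str) -> MarketSnapshot:
    """Stand-in for a real market-data call; returns fixed sample values."""
    return MarketSnapshot(symbol, 0.03, 6.5, 12_000_000.0)


def build_grounded_prompt(question: str, snap: MarketSnapshot) -> str:
    """Place the retrieved data ahead of the question so the model can use it."""
    context = (
        f"Symbol: {snap.symbol}\n"
        f"Funding rate: {snap.funding_rate_pct:.2f}%\n"
        f"24h volatility: {snap.volatility_24h_pct:.1f}%\n"
        f"Liquidity depth: ${snap.liquidity_depth_usd:,.0f}"
    )
    return (
        "Answer using only the market data below. "
        "If the data is insufficient, say so.\n\n"
        f"{context}\n\nQuestion: {question}"
    )


prompt = build_grounded_prompt(
    "What is the best leverage to use on BTC right now?",
    fetch_snapshot("BTC"),
)
print(prompt)
```

The instruction to admit when the data is insufficient is one simple way to discourage a confident guess; it does not by itself guarantee a safe answer.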

What Is Retrieval-Augmented Generation (RAG)

At its core, retrieval-augmented generation (RAG) is a framework that combines two powerful capabilities.

- Retrieving relevant information from external sources

- Generating contextual responses using that information

Instead of guessing, the system first searches for the most relevant data and then uses it to construct an answer. This approach significantly reduces hallucinations and improves factual accuracy, especially in environments where information changes frequently.

It also enables AI systems to stay aligned with real-time updates without requiring constant retraining, making them far more adaptable in dynamic industries. As a result, businesses can rely on RAG-powered systems for critical use cases where both accuracy and timeliness are essential.

Still trusting AI that guesses instead of verifying? Discover how RAG changes the game.

RAG vs Traditional AI Approaches

AI has become incredibly good at answering questions, but accuracy remains its biggest weakness.

| Aspect | Traditional AI Systems | RAG Systems |
|---|---|---|
| Core Approach | Relies on pre-trained knowledge | Combines retrieval with generation |
| Data Source | Static training data | Dynamic + external data sources |
| Accuracy | Moderate, can generate incorrect facts | High, grounded in real-time data |
| Hallucination Risk | Higher | Significantly reduced |
| Use Case Strength | Creative writing, brainstorming | Fact-based, domain-specific tasks |
| Real-Time Updates | Not available without retraining | Available through the retrieval layer |
| Context Awareness | Limited to training data | Strong, based on the retrieved context |
| Reliability | Inconsistent for critical tasks | High reliability for business use |
| Industry Adoption | General-purpose applications | Rapid adoption in high-accuracy industries |
| Example Use Cases | Content generation, casual Q&A | Customer support, finance, healthcare |

Traditional AI is great for generating ideas, but when accuracy matters, RAG systems become essential. This is why industries that depend on precise, up-to-date information are increasingly adopting RAG in AI as a standard approach.

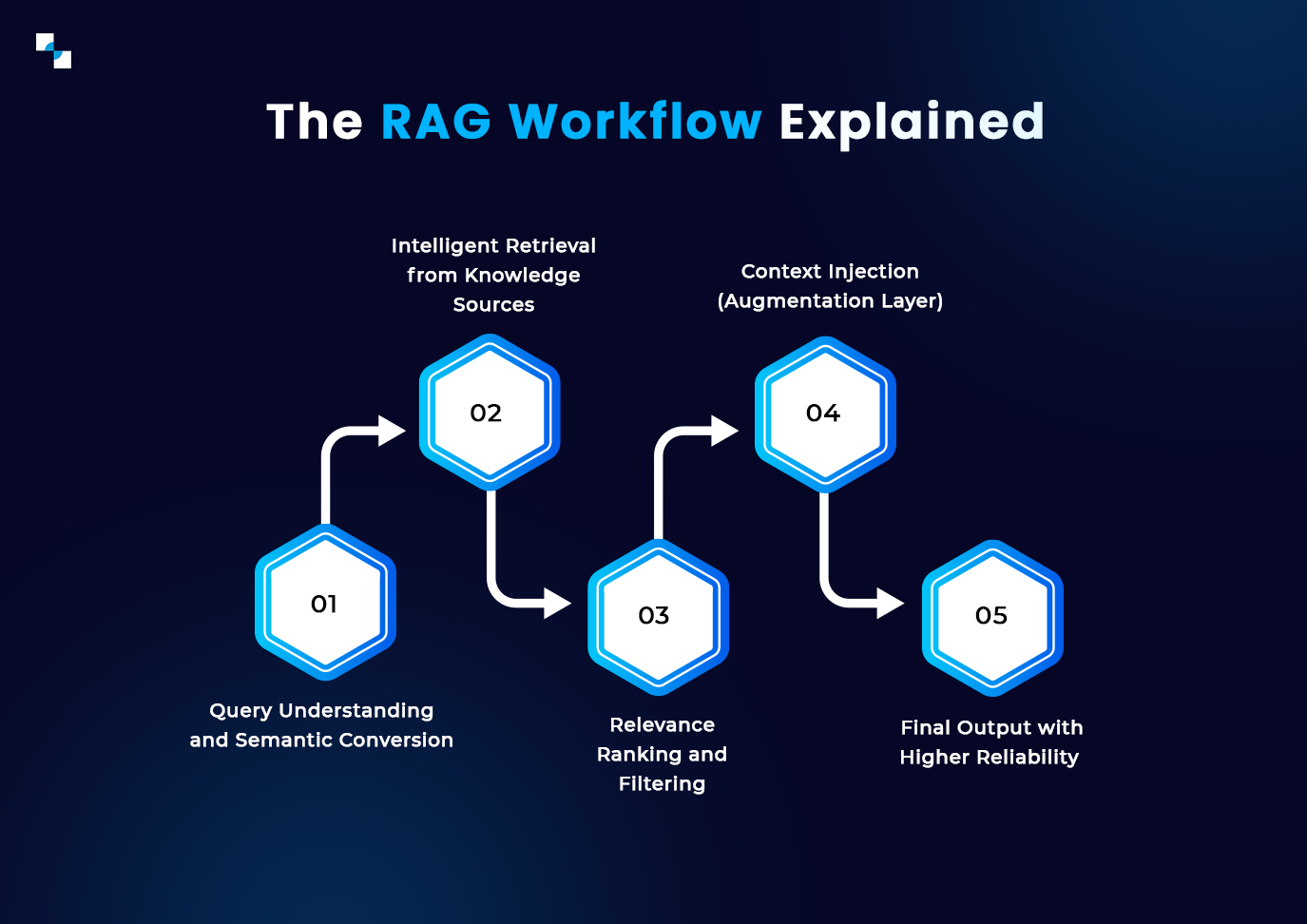

How RAG Works in Practice

To understand the mechanism, consider a simple interaction. A user asks: “What are the latest compliance requirements for crypto platforms?”

Instead of relying solely on training data, a RAG system follows a structured pipeline that ensures accuracy and relevance.

- Query Understanding and Semantic Conversion

  The system first interprets the user’s question and converts it into a semantic representation.

  This allows the model to understand intent, not just keywords, ensuring better alignment with the actual query.

- Intelligent Retrieval from Knowledge Sources

  The system searches across connected data sources such as:

  - Regulatory databases

  - Compliance documents

  - Industry reports

  - Internal knowledge bases

  It uses semantic search to find the most relevant information rather than exact keyword matches.

- Relevance Ranking and Filtering

  Not all retrieved data is useful. The system ranks the results based on context and relevance. Only the most meaningful and high-quality information is selected for the next step.

- Context Injection (Augmentation Layer)

  The selected data is then injected into the model’s input context. This step ensures that the AI has access to the most recent and relevant information before generating a response.

- Response Generation

  The language model processes both:

  - Its pre-trained knowledge

  - The retrieved real-time data

  It then generates a response that is grounded in factual information and tailored to the user’s query.

- Final Output with Higher Reliability

  The user receives an answer that is:

  - Context-aware

  - Up-to-date

  - Factually accurate

  - Easy to understand

This pipeline ensures that the output is not only fluent but also reliable. It transforms AI from a system that predicts answers into one that retrieves, verifies, and then responds, making it far more suitable for real-world and business-critical applications.
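The steps above can be sketched in a few functions. This is a minimal illustration, not a production design: the word-count `embed` function stands in for a learned embedding model, `DOCUMENTS` is a hypothetical in-memory knowledge base, and `generate` is a placeholder where a real system would call a language model.

```python
import math
import re
from collections import Counter

# Hypothetical in-memory knowledge base; a real system would use a vector store.
DOCUMENTS = [
    "Crypto platforms must verify customer identity before onboarding.",
    "Compliance rules require exchanges to report suspicious transactions.",
    "Our office cafeteria menu changes every Monday.",
]


def embed(text: str) -> Counter:
    """Toy embedding: word counts. Real systems use a learned embedding model."""
    return Counter(re.findall(r"[a-z]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def retrieve(query: str, top_k: int = 2, min_score: float = 0.1) -> list[str]:
    """Embed the query, score every document, then rank and filter."""
    q = embed(query)
    scored = sorted(((cosine(q, embed(d)), d) for d in DOCUMENTS), reverse=True)
    return [d for score, d in scored[:top_k] if score >= min_score]


def build_prompt(query: str, passages: list[str]) -> str:
    """Context injection: put the selected passages into the model's input."""
    context = "\n".join(f"- {p}" for p in passages)
    return f"Context:\n{context}\n\nQuestion: {query}"


def generate(prompt: str) -> str:
    """Placeholder: a real system would send the prompt to a language model."""
    return f"[model answer grounded in]\n{prompt}"


query = "What are the compliance requirements for crypto platforms?"
passages = retrieve(query)
print(generate(build_prompt(query, passages)))
```

Because the toy embedding only counts shared words, it matches vocabulary rather than meaning. Swapping `embed` for a real embedding model is what turns this into semantic retrieval.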

Core Architecture Behind RAG Systems

A RAG system is not just a model. It is a pipeline made up of multiple components working together.

- Knowledge Layer: This includes all the data sources, such as documents, APIs, and structured databases.

- Retrieval System: This layer searches for relevant information using techniques like semantic search and vector similarity.

- Context Layer: The retrieved information is structured and injected into the model’s input.

- Generation Model: The AI system generates a response using both its training and the retrieved context.

Understanding this architecture is essential for anyone exploring RAG model implementation in real-world products.
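One way to picture these layers in code is as small, swappable interfaces. The sketch below is an assumption about how such a design could look, not a reference architecture: `Retriever`, `Generator`, `KeywordRetriever`, `EchoGenerator`, and `RagPipeline` are all hypothetical names, and the echo generator simply returns its prompt so the example runs without a model.

```python
from dataclasses import dataclass
from typing import Protocol


class Retriever(Protocol):
    """Retrieval system: returns passages relevant to a query."""
    def search(self, query: str, top_k: int) -> list[str]: ...


class Generator(Protocol):
    """Generation model: turns a prompt into an answer."""
    def complete(self, prompt: str) -> str: ...


class KeywordRetriever:
    """Toy retriever over an in-memory knowledge layer (a list of strings)."""
    def __init__(self, knowledge: list[str]) -> None:
        self.knowledge = knowledge

    def search(self, query: str, top_k: int) -> list[str]:
        words = set(query.lower().split())
        # Score each document by how many query words it shares.
        scored = [(len(words & set(doc.lower().split())), doc) for doc in self.knowledge]
        ranked = sorted(scored, key=lambda pair: pair[0], reverse=True)
        return [doc for score, doc in ranked[:top_k] if score > 0]


class EchoGenerator:
    """Placeholder model that returns its prompt; swap in a real LLM client."""
    def complete(self, prompt: str) -> str:
        return prompt


@dataclass
class RagPipeline:
    retriever: Retriever
    generator: Generator
    top_k: int = 2

    def answer(self, question: str) -> str:
        passages = self.retriever.search(question, self.top_k)
        # Context layer: structure the retrieved passages into the model input.
        context = "\n".join(f"[{i}] {p}" for i, p in enumerate(passages, 1))
        return self.generator.complete(f"Context:\n{context}\n\nQuestion: {question}")


pipeline = RagPipeline(
    KeywordRetriever(["gas fees vary with network congestion", "support hours run 9 to 5"]),
    EchoGenerator(),
)
result = pipeline.answer("why are gas fees high")
print(result)
```

Keeping the retriever and generator behind interfaces means either one can be replaced, for example with a vector database client or a hosted model, without touching the pipeline logic.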

Real-World Applications of RAG

RAG is already being used across multiple industries, delivering measurable impact.

- Customer Support Systems

  One of the most effective use cases is RAG for customer support. Instead of generic responses, AI systems can now:

  - Pull answers from updated documentation

  - Reference current policies

  - Provide accurate troubleshooting steps

  This reduces support tickets, improves resolution time, and enhances user satisfaction.

- Enterprise Knowledge Management

  Organizations use RAG to build internal knowledge systems where employees can query company data in natural language. This eliminates the need to search across multiple tools and improves productivity significantly.

- Financial and DeFi Platforms

  In complex ecosystems like DeFi, users struggle with onboarding, gas fees, and transaction logic. RAG in DeFi enables platforms to provide contextual guidance, helping users understand processes in real time.

- Healthcare and Legal Systems

  In high-stakes environments, accuracy is critical. RAG ensures that responses are grounded in verified and up-to-date information, reducing risk and improving outcomes.

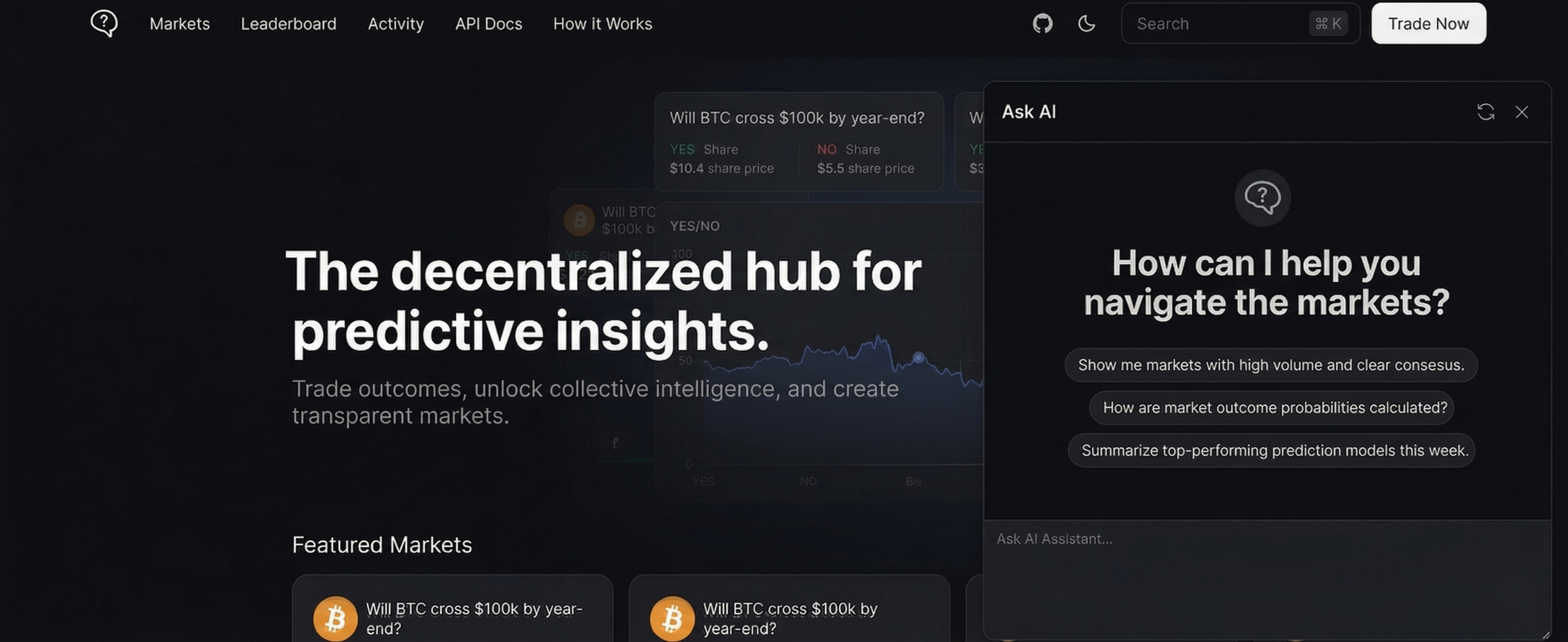

Integrating RAG into your platform does not require deep technical involvement. You simply need the right partner to provide a lightweight SDK that can be implemented within minutes, enabling a seamless “Ask AI” layer directly within your interface. Once integrated, it appears as a simple AI assistant on your platform, where users can ask questions in real time and receive accurate, context-aware responses based on your product’s data, similar to a ChatGPT-style experience but fully tailored to your ecosystem.

Here is an example in the image below showing how it can look on your platform.

The result is a more intuitive user journey, reduced confusion, and significantly improved engagement without adding complexity to your existing product.

From Basic to Advanced: How RAG Systems Evolve

Early-stage RAG systems are relatively simple, but production-grade systems involve advanced optimizations.

- Semantic Retrieval: Instead of matching keywords, systems understand the meaning behind queries.

- Intelligent Chunking: Documents are split into meaningful sections to preserve context.

- Reranking Mechanisms: Retrieved results are filtered to ensure only the most relevant data is used.

- Hybrid Search: Combining keyword and semantic search improves both precision and recall.

These enhancements are critical for scalable RAG model implementation.
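Two of these optimizations are easy to sketch. The example below shows sentence-aware chunking and one common way to merge keyword and semantic results, reciprocal rank fusion. The article does not name a fusion method, so treat that choice, the constant `k = 60`, and the document IDs as assumptions made for illustration.

```python
import re


def chunk_by_sentence(text: str, max_chars: int = 80) -> list[str]:
    """Group whole sentences into chunks so no sentence is cut in half."""
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    chunks, current = [], ""
    for sentence in sentences:
        candidate = f"{current} {sentence}".strip()
        if current and len(candidate) > max_chars:
            chunks.append(current)   # close the chunk before it overflows
            current = sentence
        else:
            current = candidate
    if current:
        chunks.append(current)
    return chunks


def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Merge ranked lists: each document scores sum(1 / (k + rank))."""
    scores: dict[str, float] = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    return sorted(scores, key=scores.get, reverse=True)


keyword_hits = ["doc_a", "doc_b", "doc_c"]   # hypothetical keyword-search order
semantic_hits = ["doc_b", "doc_d", "doc_a"]  # hypothetical vector-search order
fused = reciprocal_rank_fusion([keyword_hits, semantic_hits])
print(fused)
```

Documents that rank well in both lists rise to the top of the fused result, which is the intuition behind combining the two search styles. A reranking step would typically run after this fusion, on the merged candidates.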

Challenges You Should Be Aware Of

While RAG significantly improves AI performance, it also introduces a few important considerations that need to be managed carefully.

- RAG systems rely heavily on the quality of the underlying data. If the knowledge base is outdated, incomplete, or incorrect, the output will reflect those same issues.

- Since RAG involves an additional retrieval step before generating a response, it can slightly increase response time compared to traditional AI models.

- Implementing RAG requires additional components such as vector databases and retrieval systems, which can increase operational and maintenance costs.

- Large datasets must be optimized and structured effectively to fit within model context limits; otherwise, important information may be lost.

Despite these challenges, RAG delivers significantly higher accuracy and reliability, and its benefits outweigh the trade-offs in most real-world and business-critical scenarios.
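The context-limit challenge can be made concrete with a small sketch that packs ranked chunks into a fixed token budget. The word-count token estimate and the budget value are simplifying assumptions; a real system would count tokens with the tokenizer of the model it actually uses.

```python
def estimate_tokens(text: str) -> int:
    """Rough assumption: about one token per whitespace-separated word."""
    return len(text.split())


def pack_context(ranked_chunks: list[str], budget: int) -> list[str]:
    """Keep the highest-ranked chunks that fit; skip any that would overflow."""
    selected, used = [], 0
    for chunk in ranked_chunks:  # assumed sorted most relevant first
        cost = estimate_tokens(chunk)
        if used + cost <= budget:
            selected.append(chunk)
            used += cost
    return selected


# Placeholder chunks, ordered from most to least relevant.
chunks = [
    "most relevant passage with six words",
    "second passage that is a bit longer than the first one",
    "short third passage",
]
packed = pack_context(chunks, budget=10)
print(packed)
```

Here the second chunk is dropped because it would exceed the budget, which is exactly how relevant information can be lost when chunks are too large or the budget too small.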

If accuracy matters in your product, it’s time to rethink your AI architecture.

FAQs

Q: What is RAG in AI?

RAG in AI is a method that combines the retrieval of real-time data with AI-generated responses to improve accuracy.

Q: Why is RAG better than traditional AI?

RAG reduces hallucinations and provides more reliable answers by grounding responses in actual data.

Q: Where is RAG used?

RAG is used in customer support, healthcare, finance, and enterprise knowledge systems.

Final Thoughts

RAG is not just a technical improvement. It represents a fundamental shift in how AI systems operate and deliver value. It enables systems to provide answers that are not only fluent but also grounded in real, verifiable information by combining retrieval with generation. This moves AI from being a probabilistic responder to a system that can support real decisions, real users, and real business outcomes. Whether you are building a product, optimizing user experience, or exploring AI integration, understanding RAG is no longer optional. It is becoming a core layer for any intelligent system that aims to be trusted and scalable.

The next generation of AI will not just respond; it will retrieve, verify, and then respond. At Antier, we are helping businesses move beyond experimentation and build production-ready RAG-powered systems that drive real impact. If you are looking to integrate RAG into your platform or create an intelligent “Ask AI” layer, now is the time to act. Start building smarter AI experiences today!